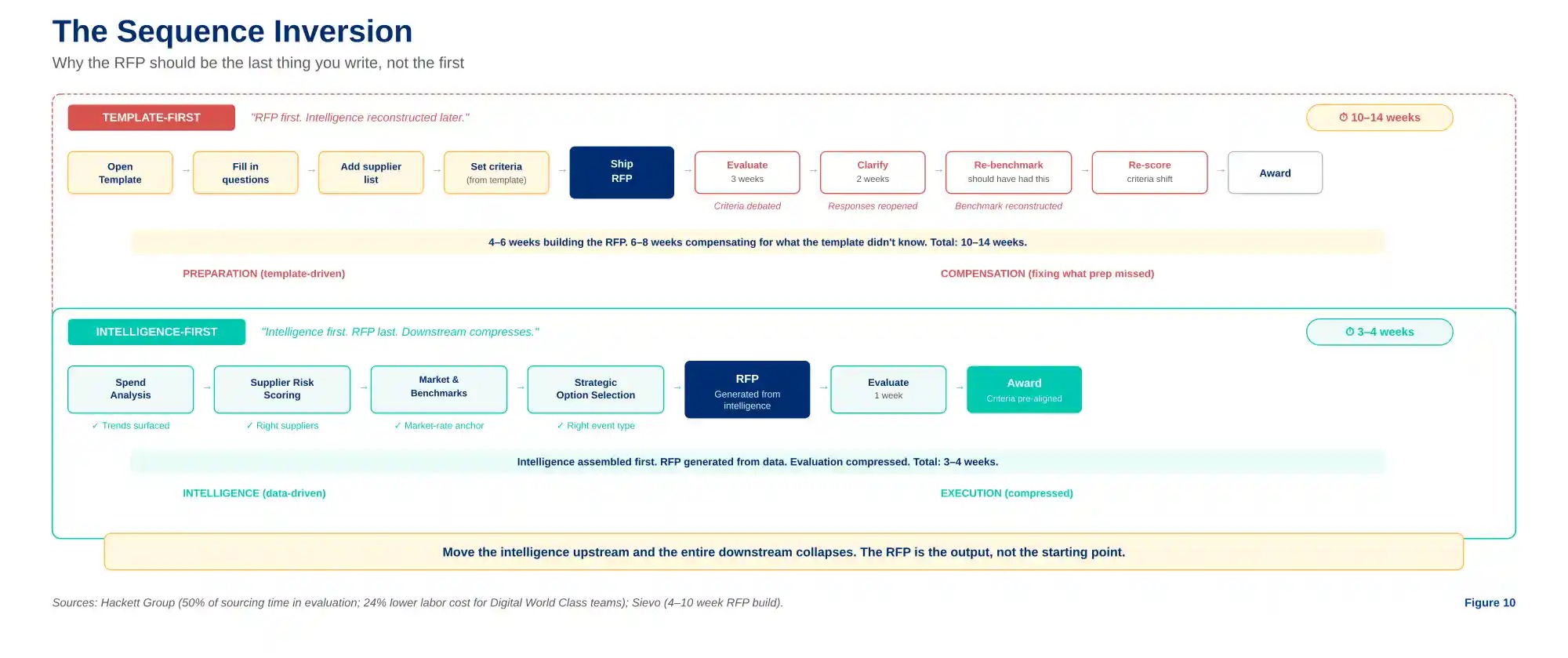

The RFP should be the last thing you write, not the first. Here’s what happens when sourcing teams invert the sequence.

TL;DR

- Half of all strategic sourcing time is consumed after the RFP ships, in evaluation, clarification, and negotiation. The drag shows up in that phase, but it is caused by what came before it.

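- Template-first RFPs inherit last year’s assumptions: last year’s questions, supplier lists, risk profiles, and market conditions. On the response side, teams with active content libraries reuse roughly two-thirds of their content across events, and buy-side templates are carried forward in much the same way.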

- One in eight RFPs receives a single response. Suppliers decline when the RFP signals the buyer hasn’t done their homework, and each missing bidder narrows the price spread by roughly 3%.

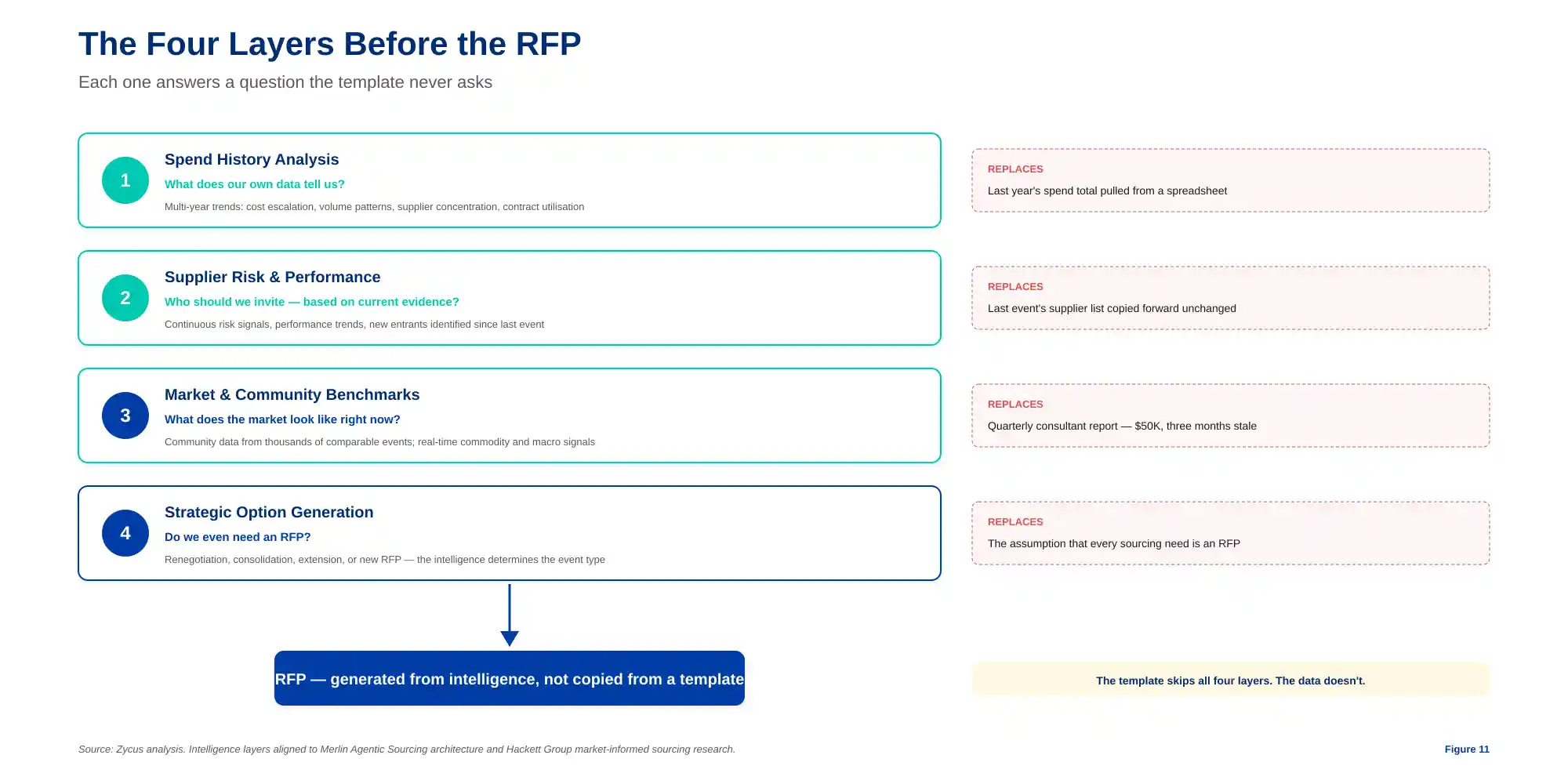

- Intelligence-first sourcing consults four layers before any document is drafted: spend history, supplier risk scores, market benchmarks, and community data. The RFP becomes an output of analysis, not the starting point.

- Sometimes the intelligence reveals the answer isn’t an RFP at all — it’s a renegotiation, a consolidation, or an extension. The template never tells you that. The data does.

- The best RFP is the one your data wrote. The worst is the one you copied from last year.

Half of all strategic sourcing time is consumed after the RFP ships — in evaluation, clarification, and negotiation. Scoring criteria get debated. Supplier responses get reopened. Benchmarks get reconstructed mid-process because nobody had them at the start. Most teams try to fix this phase. The faster teams fixed what came before it. They stopped writing the RFP first.

That claim sounds backwards. The RFP is the starting gun of every sourcing event. But that is exactly the problem. When the RFP is the first thing written, it inherits every assumption the team carried in from the last event: last year’s questions, last year’s supplier list, last year’s risk profile, last year’s market conditions. The document that shapes the entire downstream process — who gets invited, what gets asked, how bids get scored — is built on stale intelligence. And every phase that follows pays the tax. The evaluation takes longer because the criteria were wrong. The negotiation stalls because the benchmark was outdated. The award slips because the supplier shortlist was inherited rather than earned.

Where Evaluation Drag Actually Comes From

The symptoms are familiar. A cross-functional evaluation committee of eight to twelve stakeholders with no pre-agreed scoring rubric. Supplier clarification cycles that add two weeks because the RFP asked the wrong questions. Award recommendations that slide into the next quarter because the benchmark used to set the target price was three months old when the event opened. Each of these feels like an evaluation problem. All of them are preparation problems.

The pattern is consistent across industries. The average strategic RFP takes four to ten weeks to assemble before a single supplier sees it. During those weeks, the team is gathering spend data from one system, pulling contract terms from another, chasing stakeholder input through email, and formatting evaluation criteria from a template that was written for a different category in a different year. By the time the RFP ships, the market snapshot it reflects is already outdated — and the evaluation phase will spend weeks rediscovering what the preparation phase should have established.

The Template Is a Time Capsule

Loopio and APMP’s 2026 benchmark, built from more than 1,500 response teams, found that teams with active content libraries reuse 66% of their content across events. If the response side operates this way, the buy side is unlikely to be different: every RFP template carried forward into a new event imports the assumptions of the last one. The questions, the weighting, the supplier list, the risk criteria, and the view of market conditions all carry forward without being stress-tested against current conditions.

The consequence shows up in supplier behaviour. One in eight RFPs receives only a single submission — not because the category lacks suppliers, but because the RFP itself signals that the buyer hasn’t done their homework. Vague scopes, irrelevant questions, compressed timelines, and requirements that don’t reflect the current market tell qualified suppliers that competing will not be worth the cost of responding. Each additional supplier submission widens the price spread by roughly 3%. When the pool collapses to one or two responses, the buyer has lost leverage before negotiation even begins — and the root cause was an RFP shaped by a template rather than by the market.

Figure 1 — The sequence inversion: move intelligence upstream, and the entire downstream compresses.

What Intelligence-First Sourcing Actually Looks Like

The inversion is structural, not cosmetic. Before any document is drafted, four intelligence layers are consulted — each one answering a question the template never asks.

Figure 2 — The four layers before the RFP: each answers a question the template never asks.

The buying organization’s own spend history is analyzed first — not last year’s total, but multi-year trend data: cost escalation rates, volume patterns, supplier concentration, contract utilization. This is the step where buried cost drift surfaces — price escalations compounding across invoicing streams that no single contract review would catch, volume shifts that changed the category’s economics without anyone noticing, supplier concentration that crept from manageable to risky. No template would have surfaced these patterns. The data does.
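Two of the signals named above, cost escalation and supplier concentration, can be sketched in a few lines. This is a minimal illustration, not a description of any particular platform: the function names, the choice of a compound annual growth rate for escalation, the use of a Herfindahl-Hirschman index for concentration, and all figures are assumptions made for the example.

```python
def annual_escalation(unit_prices):
    """Compound annual growth rate of unit price.

    unit_prices maps year -> average unit price paid that year
    (hypothetical input shape chosen for this sketch).
    """
    years = sorted(unit_prices)
    first, last = years[0], years[-1]
    periods = last - first
    if periods == 0:
        raise ValueError("need at least two distinct years")
    return (unit_prices[last] / unit_prices[first]) ** (1 / periods) - 1


def supplier_concentration(spend_by_supplier):
    """Herfindahl-Hirschman index on spend shares.

    Ranges from near 0 (spend spread thinly) to 1.0 (a single supplier).
    """
    total = sum(spend_by_supplier.values())
    return sum((spend / total) ** 2 for spend in spend_by_supplier.values())


# Illustrative figures only; not taken from any real category.
prices = {2022: 100.0, 2023: 104.0, 2024: 108.16}
spend = {"Supplier A": 600_000, "Supplier B": 300_000, "Supplier C": 100_000}

escalation = annual_escalation(prices)
concentration = supplier_concentration(spend)
print(f"escalation: {escalation:.1%} per year, concentration: {concentration:.2f}")
```

Run against several years of invoices rather than last year’s total, the same two numbers show whether prices have been compounding quietly and whether one supplier’s share has crept upward.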

The supplier landscape is scored in real time. Not the annual review from six months ago — continuous risk and performance signals that reveal which suppliers are improving, which are deteriorating, and which new entrants have emerged since the last event. The question shifts from “who did we invite last time?” to “who should we invite based on current evidence?”

Market benchmarks are consulted before the target is set. This is the step that has changed most dramatically. Five years ago, a category benchmark from a consulting firm cost $50,000 and arrived quarterly, already three months old by the time a sourcing team could use it. Today, community data from thousands of comparable sourcing events across similar industries and categories gives the team a market-rate anchor before the first bid arrives. The information scarcity that justified starting with a template no longer exists. Spend Matters’ research on market-informed sourcing is direct: buyers who go to market without benchmarks routinely leave money on the table and extend their cycles because intelligence not gathered upfront has to be reconstructed during evaluation.

And critically, the intelligence determines whether the event should be an RFP at all. Sometimes a renegotiation is the better move. Sometimes a supplier consolidation. Sometimes an extension at improved terms. The RFP is one possible output of the intelligence layer — not the default. When the answer is an RFP, it writes itself — because the criteria, the supplier list, and the target outcomes have already been determined by data. Hackett Group research finds that Digital World Class procurement teams operate with 24% lower labor cost than typical peers, not by working faster, but by eliminating the preparation gap that template-first processes create.
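The idea that the RFP is only one possible output can be made concrete with a small routing sketch. Everything here is hypothetical: the `CategorySignals` fields, the `recommend_action` function, and every threshold are placeholders for illustration, and a real team would set them per category from its own data.

```python
from dataclasses import dataclass


@dataclass
class CategorySignals:
    # Hypothetical inputs, assumed to come from the intelligence layers.
    price_vs_benchmark: float  # current price / market benchmark; 1.10 = 10% above market
    incumbent_risk: str        # "low", "medium", or "high"
    active_suppliers: int      # suppliers currently receiving spend in the category


def recommend_action(s: CategorySignals) -> str:
    """Pick a sourcing action from current signals (illustrative thresholds only)."""
    if s.incumbent_risk == "high":
        return "rfp"            # a risky incumbent calls for testing the market
    if s.active_suppliers > 5 and s.price_vs_benchmark <= 1.05:
        return "consolidation"  # near-market pricing spread across too many suppliers
    if s.price_vs_benchmark <= 1.02:
        return "extension"      # already at market; extend at improved terms
    if s.price_vs_benchmark <= 1.10:
        return "renegotiation"  # moderately above market; reopen terms with the incumbent
    return "rfp"                # well above market; run a competitive event


print(recommend_action(CategorySignals(1.08, "low", 2)))
```

The point of the sketch is the ordering: the decision about which event to run is made from the signals first, and a document is drafted only if the answer turns out to be an RFP.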

What Compresses When the Sequence Inverts

The downstream effects are measurable. When evaluation criteria are shaped by current intelligence rather than inherited from a template, the scoring debate that used to take three weeks takes one — because the criteria were aligned before the bids arrived. When supplier shortlists are built from real-time risk and performance data, the clarification cycles that used to add two weeks disappear — because the right suppliers were invited. When the benchmark target reflects community and market data rather than a consultant’s quarterly estimate, the negotiation doesn’t stall on price discovery — because both sides already know the market.

The same team that ran twelve strategic events a year can run forty or fifty. Not by hiring more people. Not by working longer hours. By stopping the practice of writing the most consequential document in the sourcing cycle first, before any intelligence has been gathered, and then spending the rest of the process compensating for that decision. The preparation gap is not a minor inefficiency. It is the single largest controllable variable in sourcing cycle time. Close it, and everything downstream accelerates.

This direction is consistent with Hackett Group’s research: 80% of procurement executives now identify AI as transformational for the function, yet only 12% have deployed it at scale. The gap is not ambition but architecture. Platforms built for this inversion (Zycus’s Merlin Agentic Sourcing among them, the sourcing entry point into the broader Intake-to-Outcomes architecture) assemble the intelligence layer autonomously: spend analysis, supplier risk scoring, community benchmarks from thousands of events, and real-time market signals. The RFP is generated from that intelligence. The agent does the preparation that used to take weeks. The category manager directs the strategy. And the evaluation phase, where half of all sourcing time used to disappear, compresses to what it always should have been: a decision, not a reconstruction.

The best RFP is the one your data wrote. The worst is the one you copied from last year.

Related Reads:

- RFI, RFQ, and RFP Explained: A Practical Guide [2026]

- RFP Automation for Mid-Market Procurement: Smarter Proposal Evaluation with AI

- Improving Decision-Making with AI-Powered RFP Scoring Systems

- How to Write an Effective RFP for Autonomous Sourcing

- Why Sourcing Is the Highest-ROI Entry Point for Agentic AI in S2P